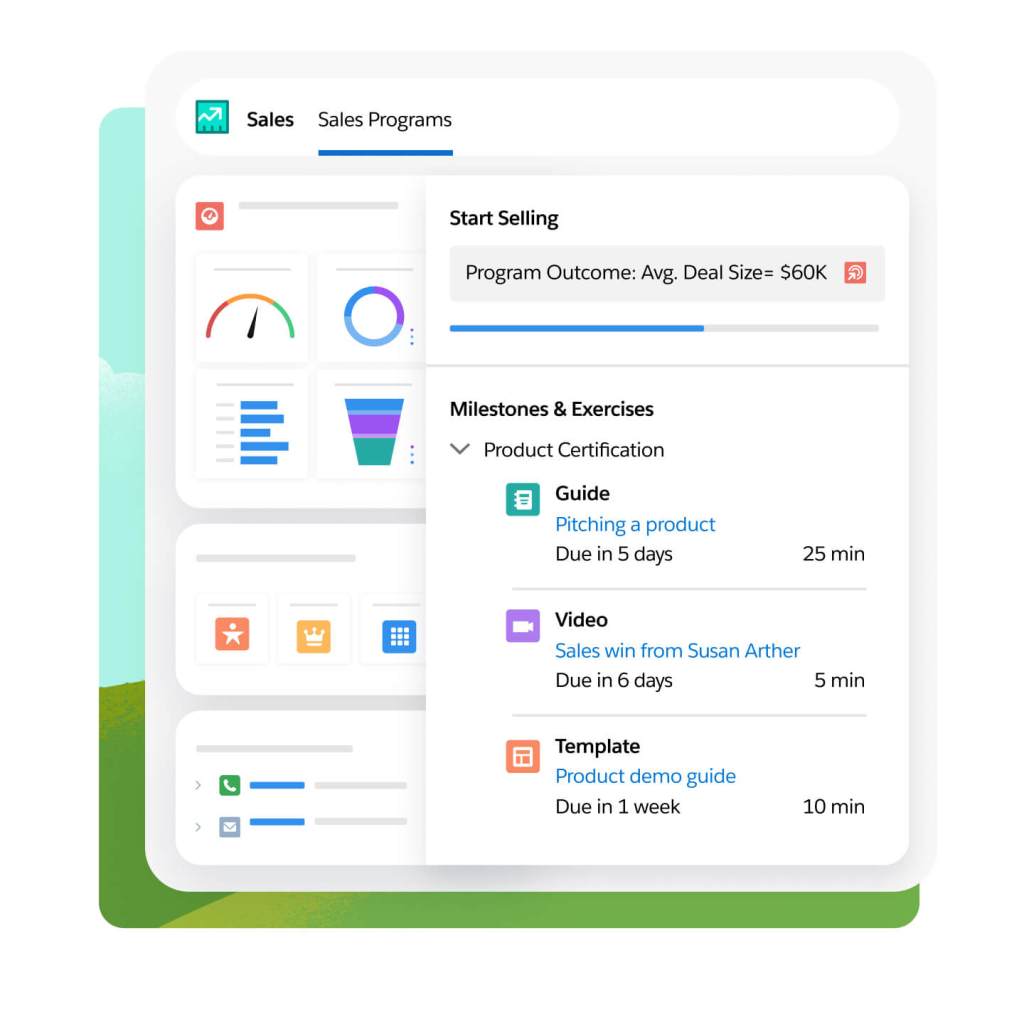

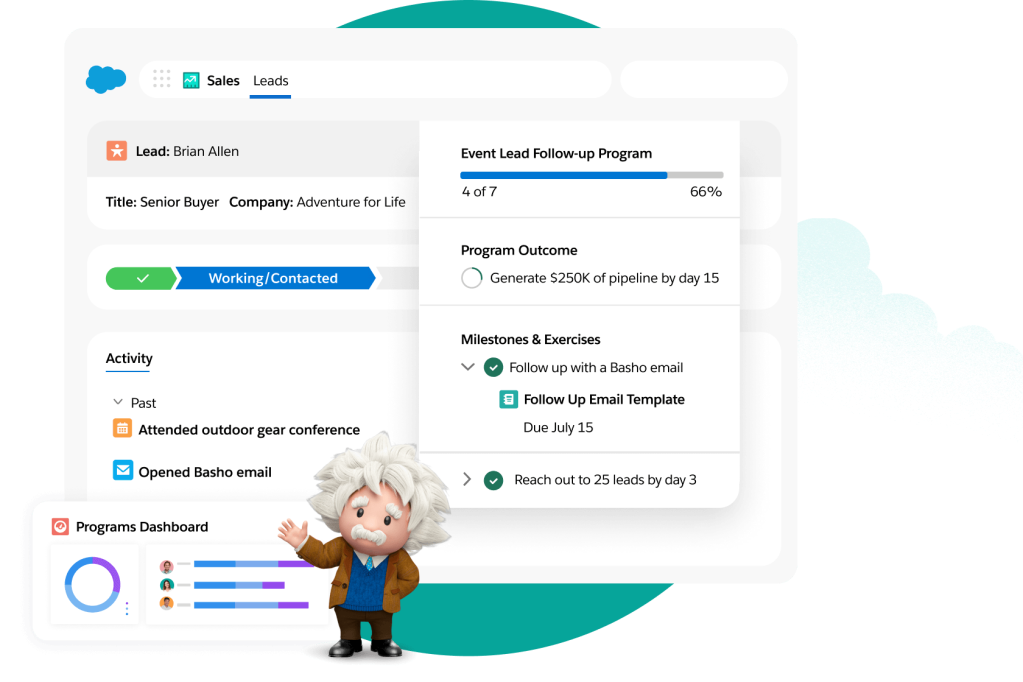

Sales Programs

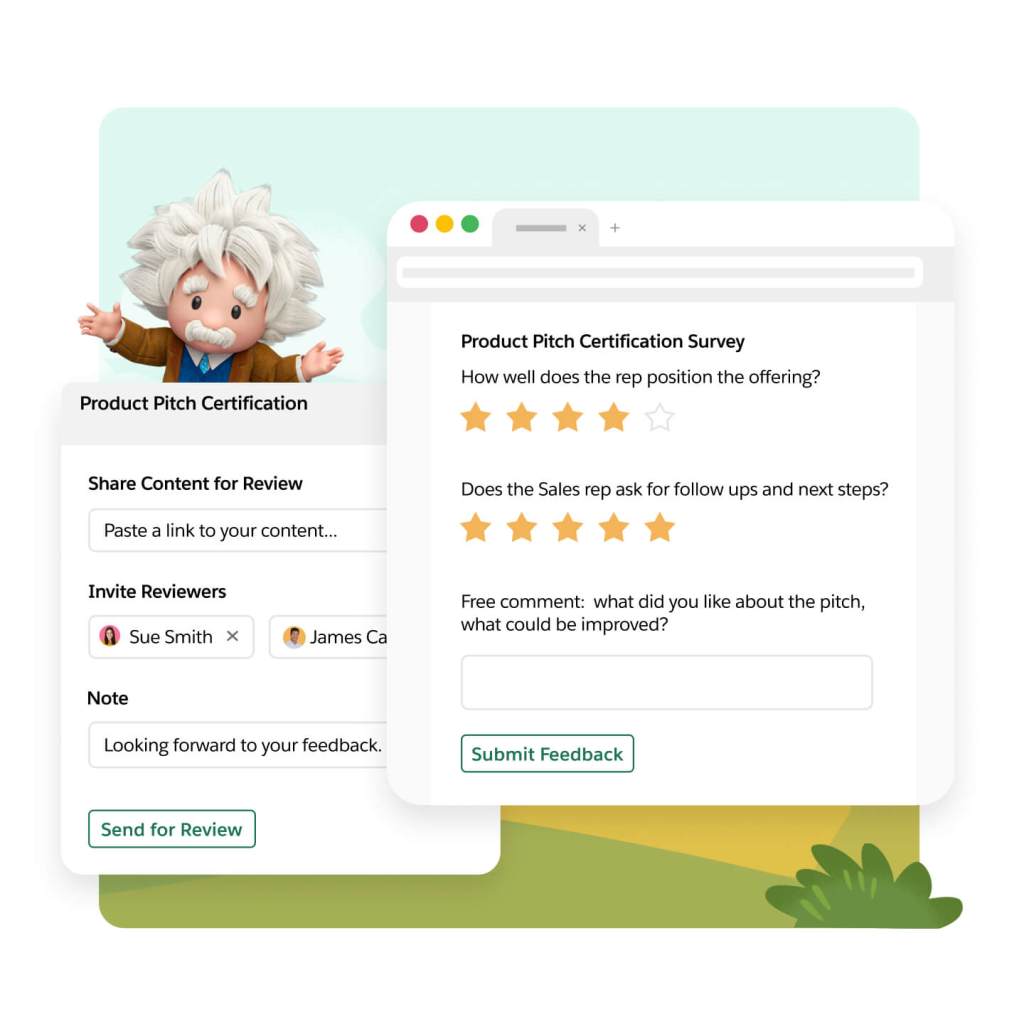

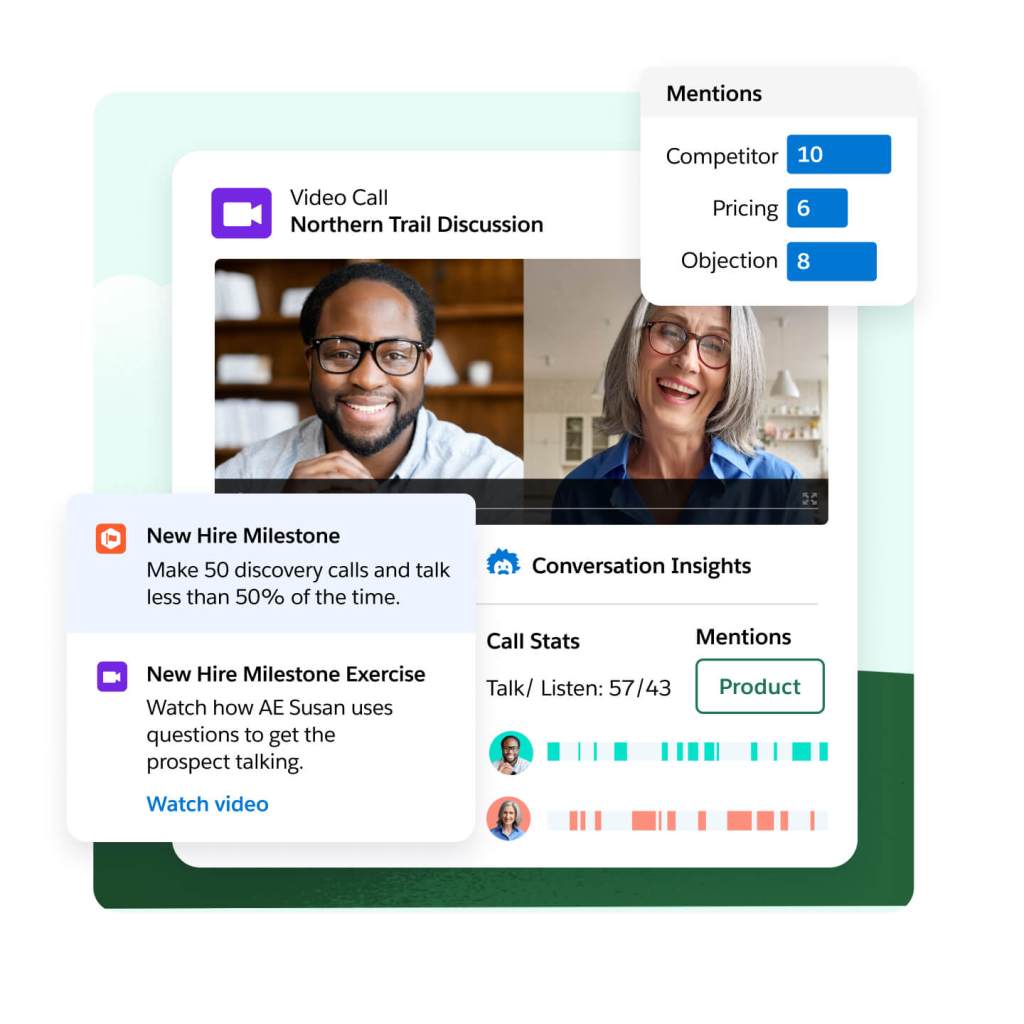

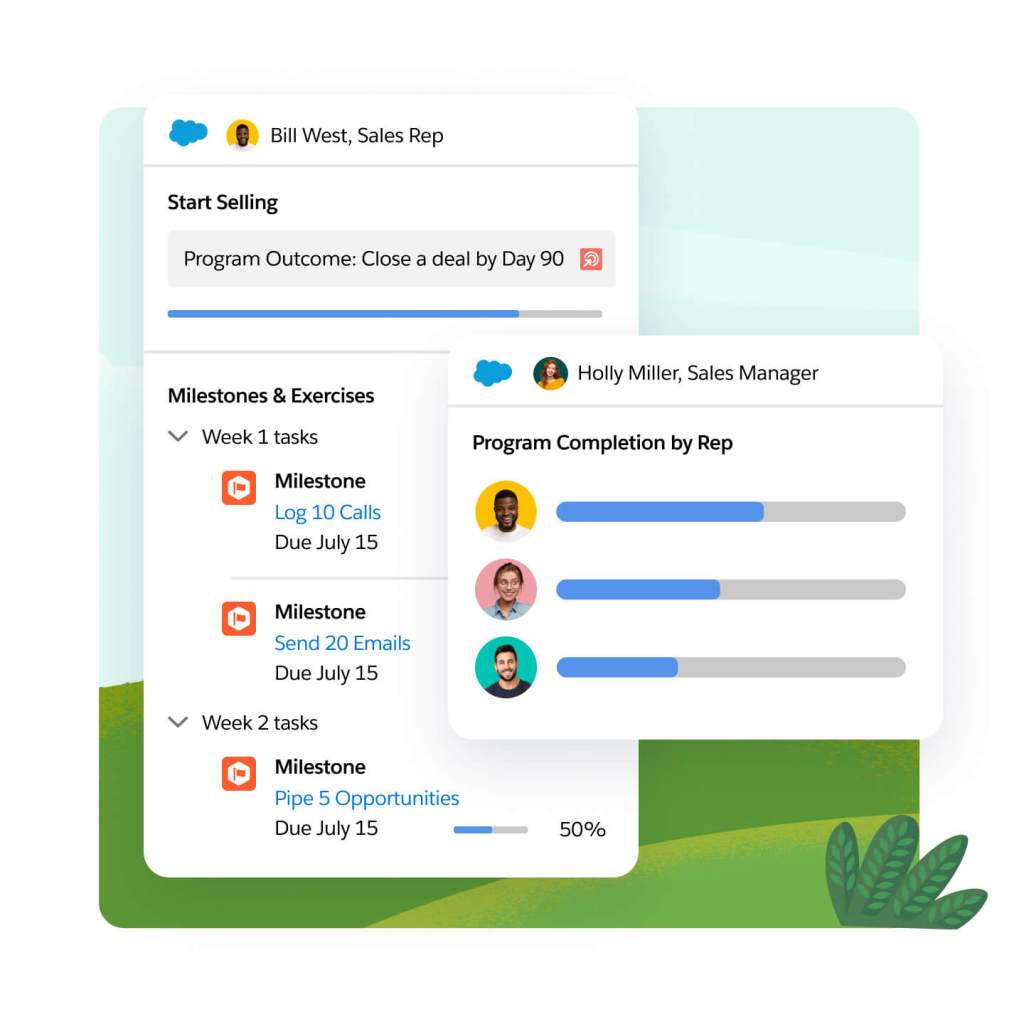

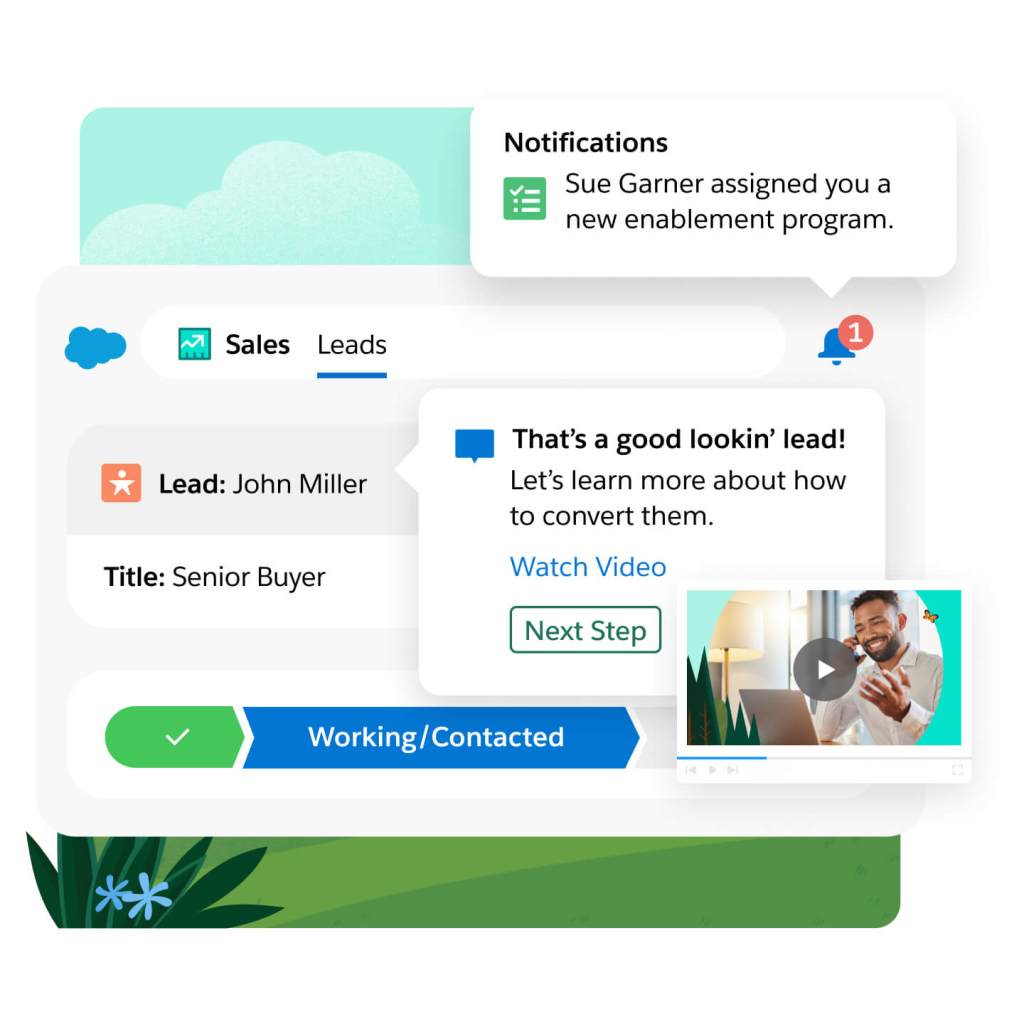

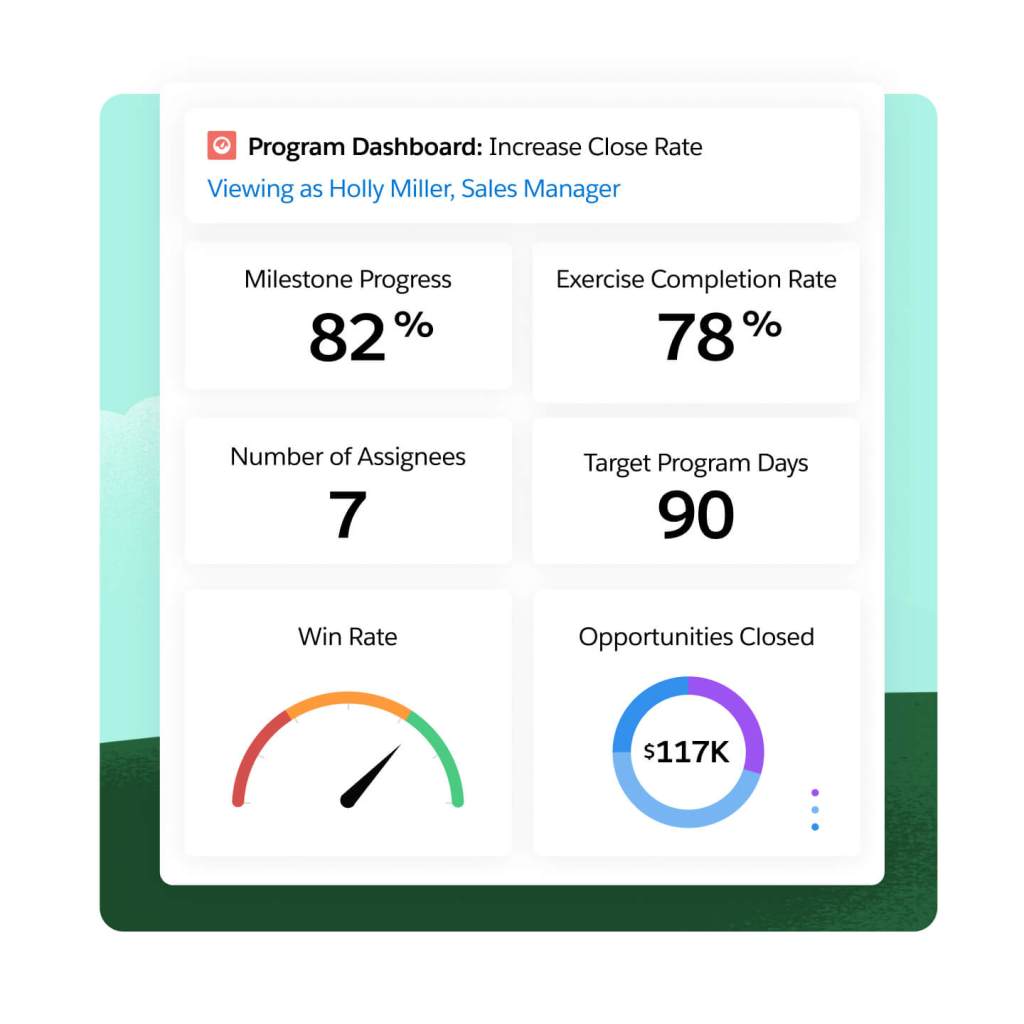

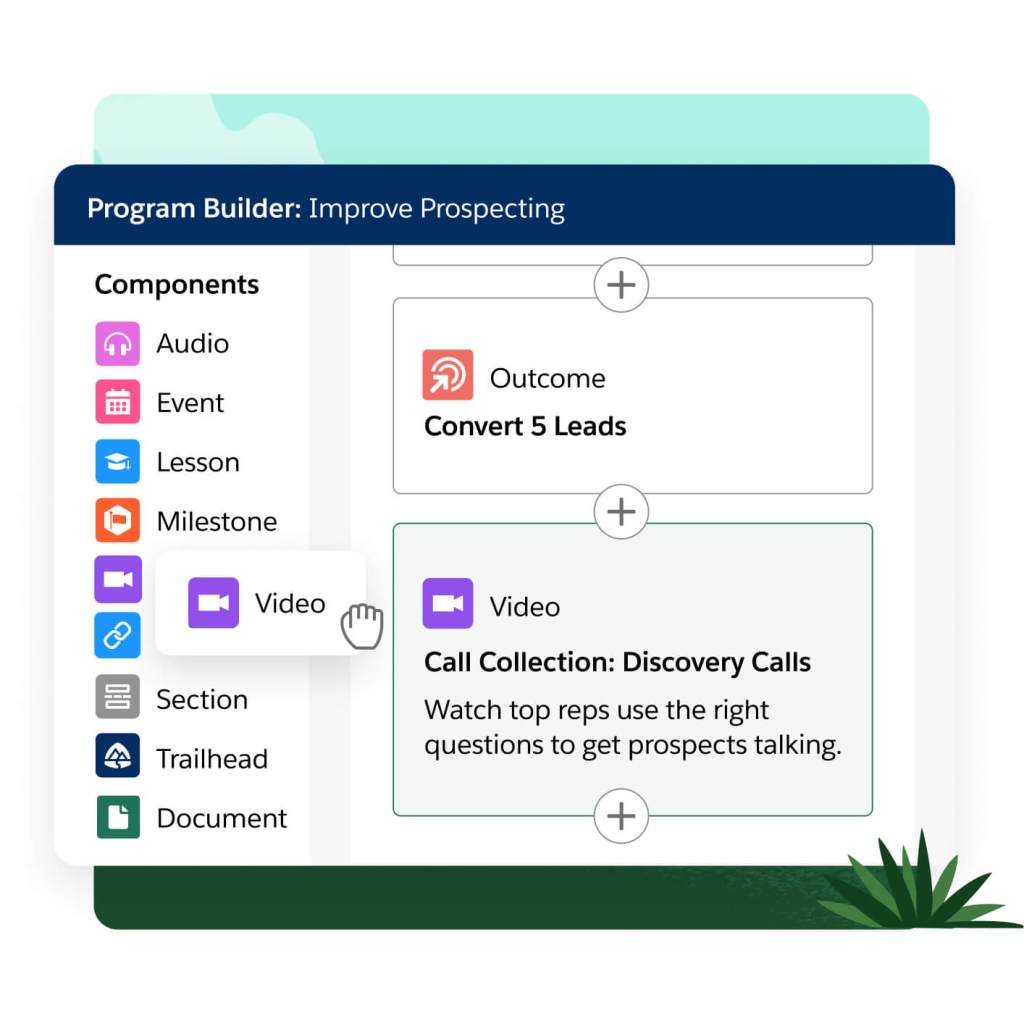

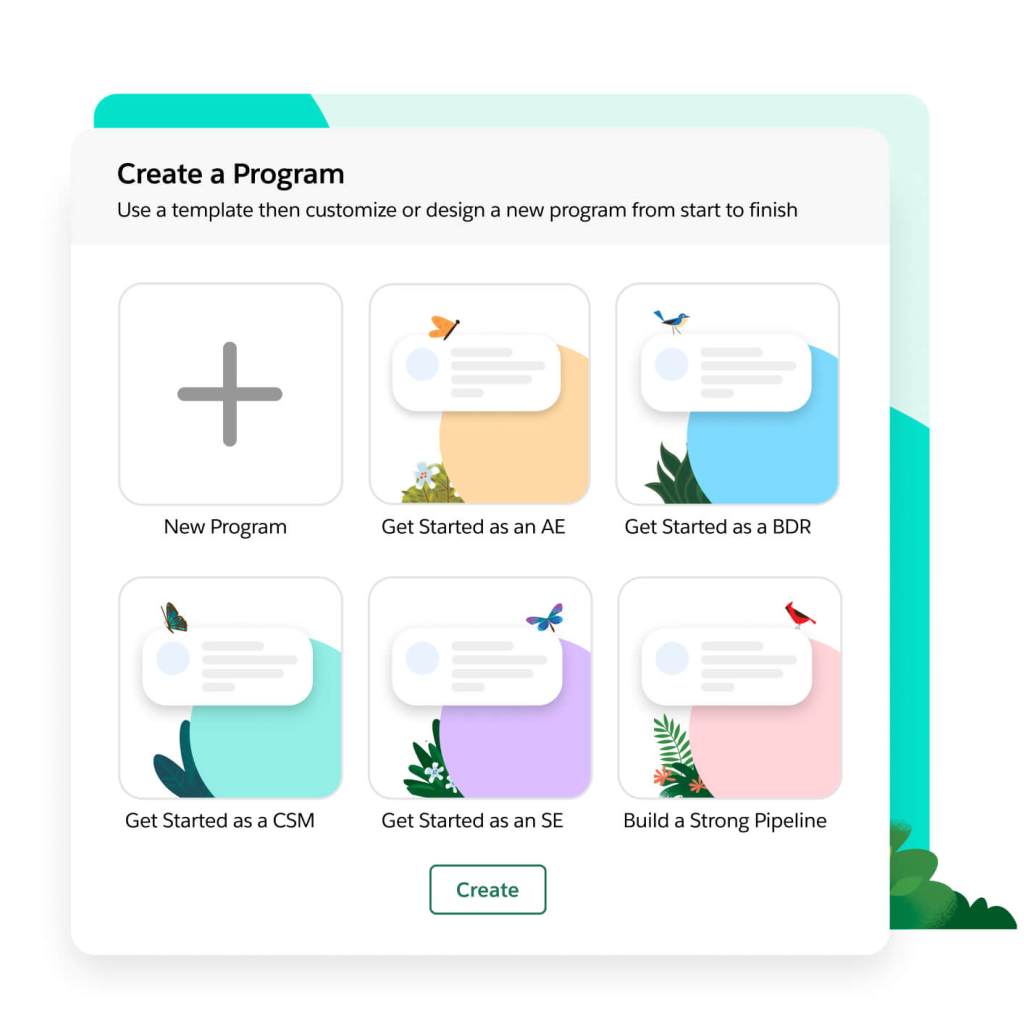

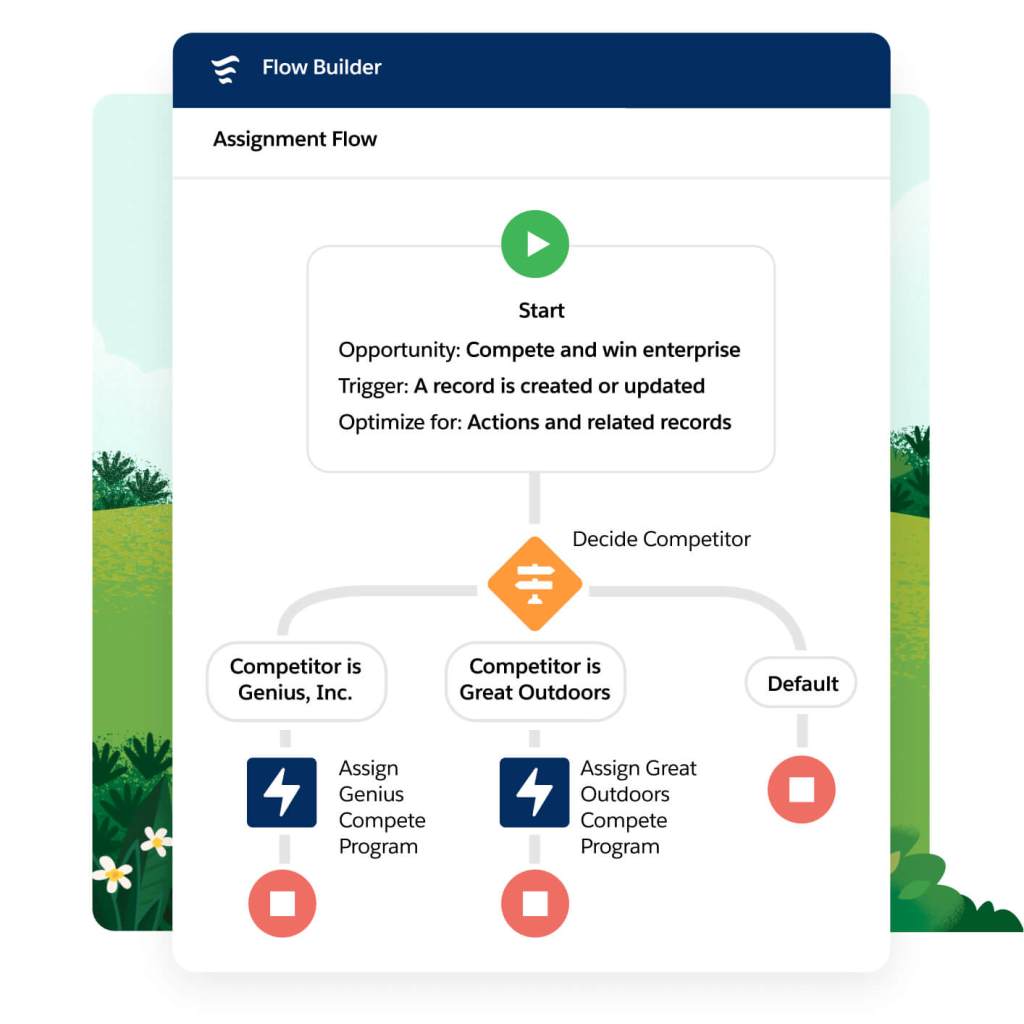

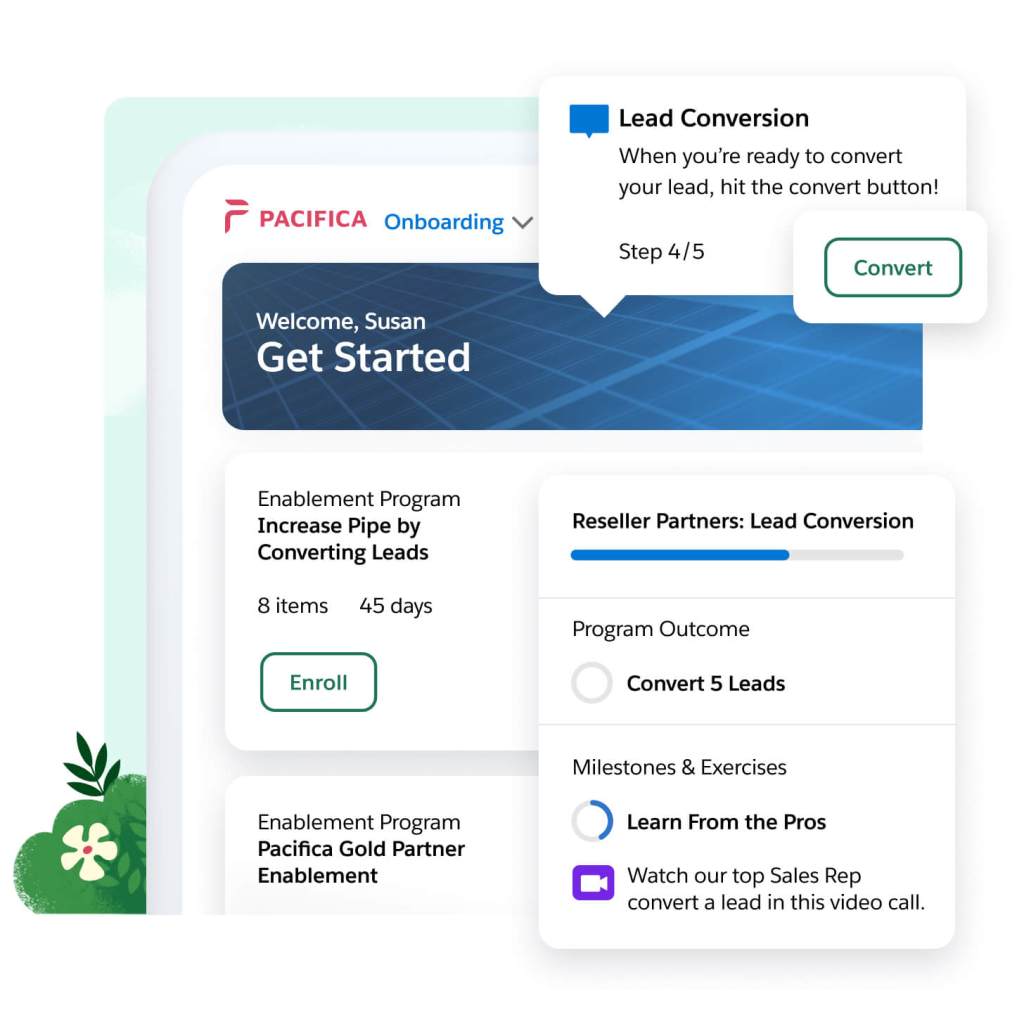

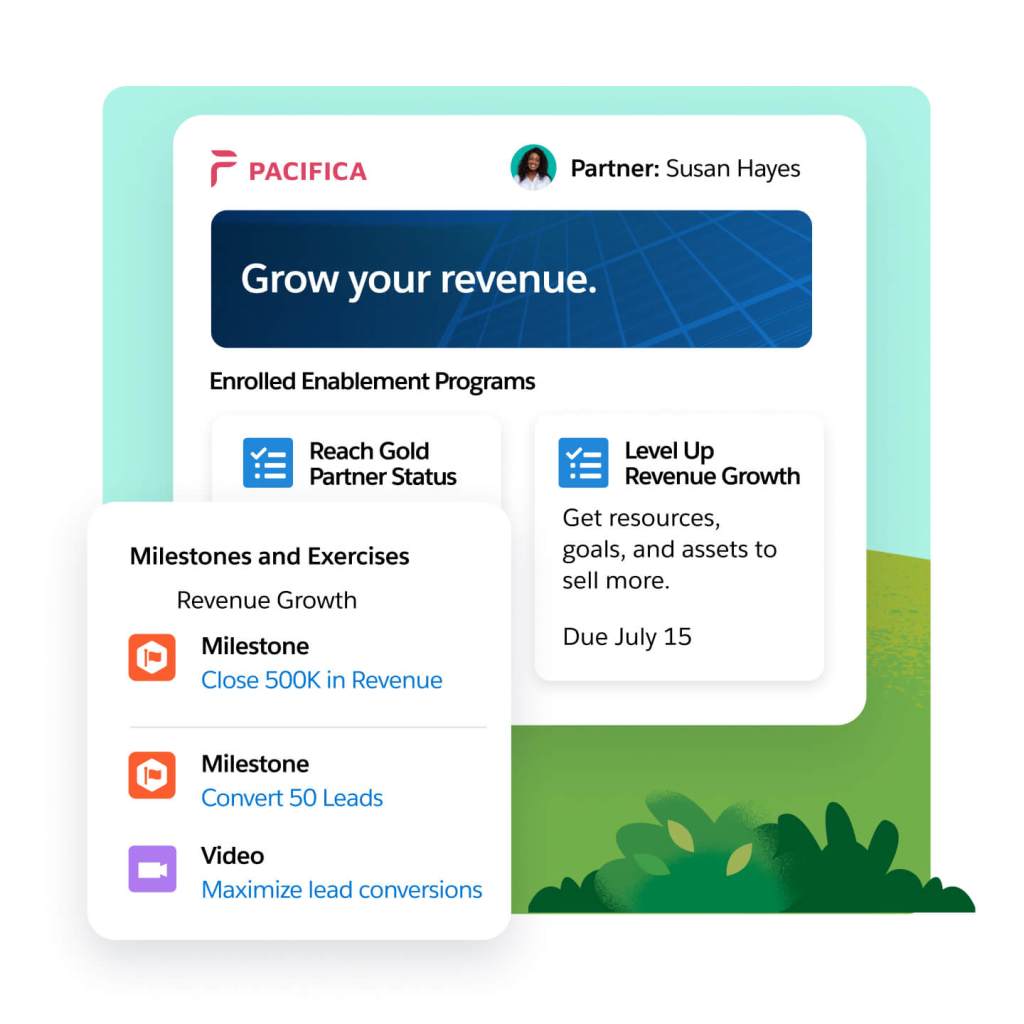

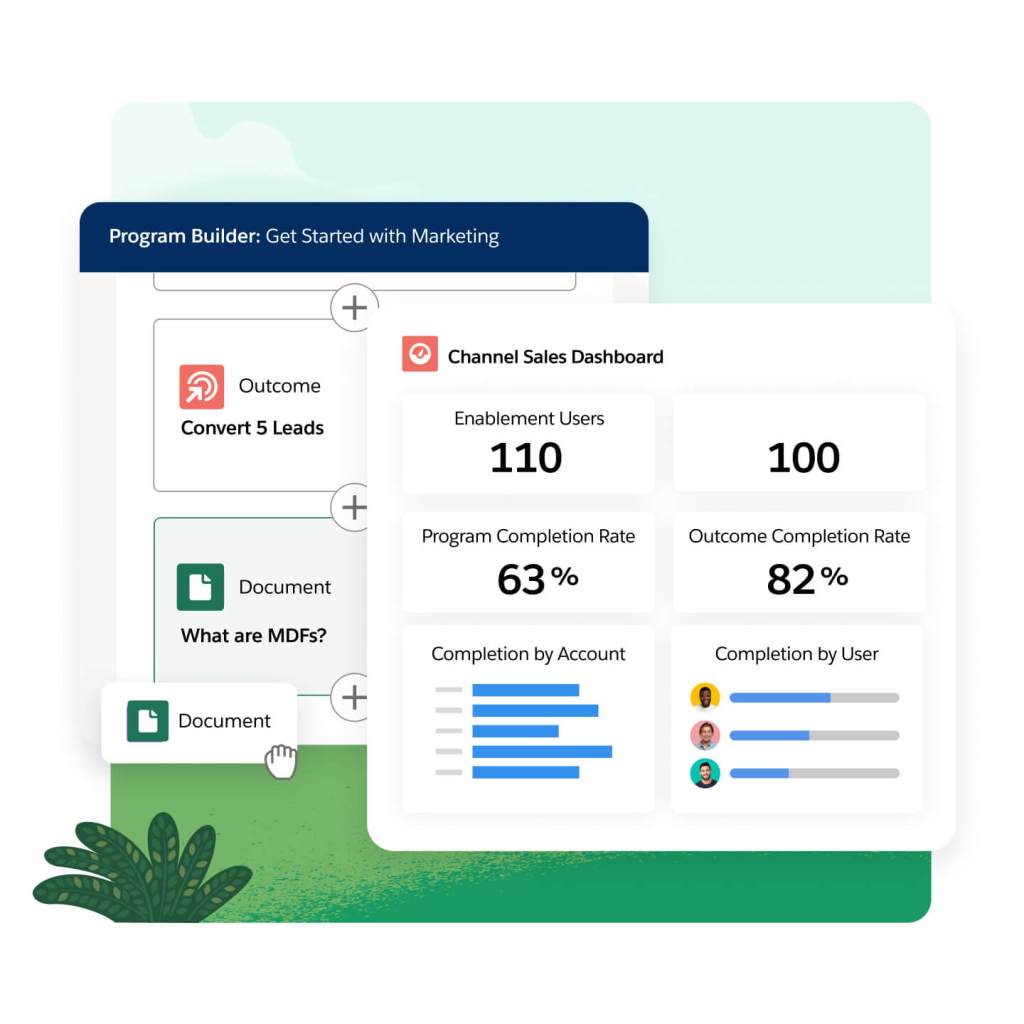

Sell faster with Sales Programs, built into CRM. Guide sellers to success with AI-powered coaching and relevant resources, surfaced in the flow of work. Improve seller productivity with programs connected to rep activity and sales results. Deliver value quickly with best-practice templates and intelligent, automated program delivery, with no code required.